Anthropic has no ads, no consumer marketplace, and no legacy software bundle. Yet the company reached a $14 billion annualized revenue run rate in less than three years from its first dollar of revenue, earning a $380 billion valuation by early 2026. That trajectory makes it one of the fastest-scaling enterprise technology companies in history.

The paradox runs deeper. Anthropic was founded as a safety-focused research lab, not a commercial software vendor. Today, it commands infrastructure commitments exceeding $100 billion from Amazon, Google, and Microsoft — three companies that are simultaneously its partners and competitors.

In this breakdown, we'll unpack how Anthropic monetizes "reliable reasoning" at scale through token-based API pricing, a tiered subscription ladder, explosive agentic product revenue, and massive enterprise contracts. We’ll also go over the enormous cost structure required to sustain Anthropic and how it stacks up against OpenAI, Google, and Microsoft.


How Anthropic works

Anthropic was founded in 2021 by a group of researchers who left OpenAI, led by siblings Dario and Daniela Amodei. The company positions itself as an "AI safety and research" company whose mission is to develop AI for the long-term benefit of humanity. It is incorporated as a Public Benefit Corporation (PBC), legally obligating it to balance stockholder interests with its safety mission.

Siblings Dario and Daniela Amodei, two of Anthropic's co-founders

The company builds and deploys the Claude model family, its flagship frontier AI system. Claude is commercialized through three primary surfaces:

consumer and prosumer chat apps (Free, Pro, and Max tiers)

business and enterprise deployments (Team and Enterprise tiers with SSO, SCIM, audit logs, and HIPAA-configurable options)

a developer API with per-token metered billing plus tool add-ons like web search and code execution

Anthropic has also launched specialized vertical products for education and healthcare.

At its core, Anthropic sells "intelligence as a utility" — metered access to reasoning, coding, and agentic capabilities via the Claude model hierarchy (Opus, Sonnet, Haiku), each tiered by capability and cost. Enterprises and developers integrate high-stakes cognitive work into their applications and workflows at predictable, usage-based pricing.

Anthropic's primary differentiator is its "Constitutional AI" training approach, which uses a written set of principles to govern model behavior through supervised and reinforcement learning. The company explicitly refuses ad-supported monetization, positioning Claude as a "trusted advisor" free from commercial bias. Additionally, the Long-Term Benefit Trust (LTBT) governance structure ensures independent oversight of the company's mission even through an IPO.

On the infrastructure side, Anthropic operates a multi-cloud distribution model. Claude is available natively on AWS Bedrock, Google Cloud Vertex AI, and Microsoft Azure Foundry, reducing procurement friction for enterprises already spending on those platforms.

The company is also transitioning from purely rented cloud compute toward vertically integrated owned infrastructure, including a $50 billion Fluidstack partnership for custom data centers in Texas and New York.

Anthropic's revenue streams

Anthropic is privately held and does not publish audited revenue figures. All figures cited are annualized "run-rate revenue" snapshots disclosed by the company or reported by credible financial outlets. The trajectory speaks for itself: approximately $87 million in early 2024, roughly $1 billion at the beginning of 2025, over $5 billion by August 2025, approaching $7 billion by October 2025, crossing $9 billion by year-end 2025, and $14 billion by February 2026.
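To put the trajectory in perspective, the snapshots above can be turned into step-by-step growth multiples. This is a rough sketch: it treats "over", "approaching", and "crossing" as the round figures quoted, and the snapshot dates are only approximate, so the multiples are indicative rather than precise.

```python
# Run-rate snapshots quoted in the text, in billions of USD.
# Labels follow the article; values are rounded to the quoted figures.
snapshots = [
    ("early 2024", 0.087),
    ("start of 2025", 1.0),
    ("August 2025", 5.0),
    ("October 2025", 7.0),
    ("year-end 2025", 9.0),
    ("February 2026", 14.0),
]


def growth_multiples(points):
    """Return (from_label, to_label, multiple) for each consecutive pair."""
    return [
        (a_label, b_label, b_value / a_value)
        for (a_label, a_value), (b_label, b_value) in zip(points, points[1:])
    ]


for start, end, multiple in growth_multiples(snapshots):
    print(f"{start} -> {end}: {multiple:.1f}x")

# Growth from the first snapshot to the last one.
overall = snapshots[-1][1] / snapshots[0][1]
print(f"overall: {overall:.0f}x")
```

On these rounded inputs, the run rate grew roughly 160-fold between the first and last snapshots, with the largest single step coming between early 2024 and the start of 2025.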

The company's revenue is built on three documented monetization engines: model-as-a-service API revenue (70–75% of total), subscriptions and seat-based licensing (10–15% of total), and Claude Code and agentic products (approximately 18% of total, based on $2.5 billion of $14 billion). The remainder is captured through large enterprise contracts and public sector deals.

API and token-based revenue (model-as-a-service)

This is Anthropic's primary revenue engine, accounting for approximately 70–75% of total income. It is strictly consumption-based, measured in tokens — the fundamental units of text and code processing. Revenue scales in direct proportion to the complexity and volume of tasks performed by the AI.

The Claude model hierarchy creates a tiered pricing structure designed to capture different segments of the market:

| Model tier | Input cost (per 1M tokens) | Output cost (per 1M tokens) | Standard context window |
| --- | --- | --- | --- |
| Claude Opus 4.6 | $5.00 | $25.00 | 1M tokens |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 1M tokens |
| Claude Haiku 4.5 | $1.00 | $5.00 | 200,000 tokens |

The economic logic is deliberate: migrate users from low-cost Haiku into higher-margin Sonnet and Opus as their workflows mature. Sonnet is positioned as the "balanced" model, offering strong coding and reasoning capabilities at a 67% lower cost than previous flagship generations.

Extended Thinking mode adds another revenue lever. It generates "hidden" reasoning tokens charged at standard output rates, effectively increasing revenue per request by approximately 16% for complex logic outputs.

Tool and workflow monetization adds further layers beyond tokens: web search is billed at $10 per 1,000 searches, and code execution at $0.05 per hour per container after a free daily allowance.
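The metering described in this section can be sketched as a small cost estimator. It uses only the list prices quoted above; the function name, the short model keys, and the example token counts are illustrative assumptions, not part of any Anthropic SDK. Extended Thinking tokens are counted as output tokens, as the text describes. Code execution is left out because the size of the free daily allowance is not given here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    input_per_mtok: float   # USD per 1M input tokens
    output_per_mtok: float  # USD per 1M output tokens


# List prices copied from the pricing table in this section.
# The short keys ("opus", "sonnet", "haiku") are hypothetical labels.
PRICES = {
    "opus": ModelPrice(5.00, 25.00),
    "sonnet": ModelPrice(3.00, 15.00),
    "haiku": ModelPrice(1.00, 5.00),
}

WEB_SEARCH_USD_PER_1K = 10.00  # USD per 1,000 web searches, as quoted


def request_cost(model, input_tokens, output_tokens,
                 thinking_tokens=0, web_searches=0):
    """Estimate the USD cost of one request at list prices."""
    price = PRICES[model]
    # Hidden reasoning tokens are billed at the standard output rate.
    billed_output = output_tokens + thinking_tokens
    token_cost = (
        input_tokens * price.input_per_mtok
        + billed_output * price.output_per_mtok
    ) / 1_000_000
    search_cost = web_searches * WEB_SEARCH_USD_PER_1K / 1_000
    return token_cost + search_cost


# Example: a 20,000-token prompt with a 2,000-token answer on Sonnet,
# with and without 1,000 hypothetical reasoning tokens.
base = request_cost("sonnet", 20_000, 2_000)
with_thinking = request_cost("sonnet", 20_000, 2_000, thinking_tokens=1_000)
print(f"base: ${base:.3f}, with thinking: ${with_thinking:.3f}")
```

In this made-up example the reasoning tokens raise the bill from about 9 cents to about 10.5 cents. The actual uplift depends entirely on how many reasoning tokens a given task produces.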

Subscriptions and seat-based licensing

Subscriptions account for approximately 10–15% of total revenue, providing a stable, recurring baseline. They also serve as a laboratory for observing user behavior and refining model capabilities.

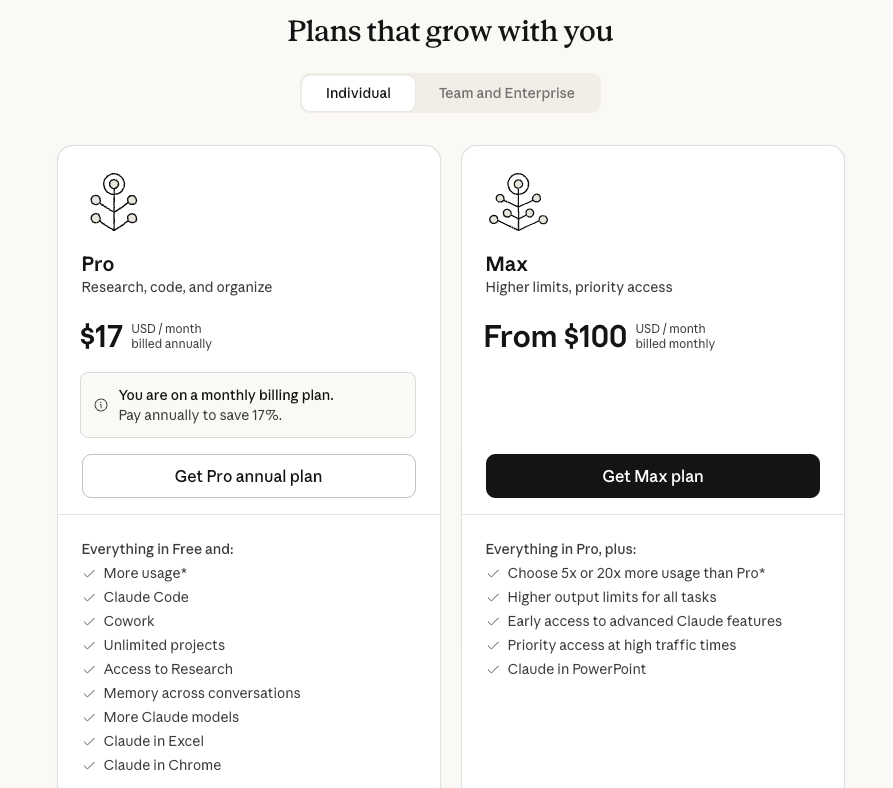

The subscription ladder spans five tiers:

Claude Free: Baseline access with limited usage

Claude Pro ($17/month billed annually or $20/month billed monthly): Raises the Free tier's usage limits and adds priority access during peak times

Claude Max ($100–$200/month): Designed for power users with 5x to 20x Pro usage levels and "Priority Service Tiers"

Claude Team ($20/seat/month billed annually, 5-seat minimum): Bridge to enterprise adoption with administrative tools and collaboration features

Claude Enterprise (custom pricing): SSO, audit logging, domain capture, and HIPAA-configurable options for regulated industries

The Max tier illustrates the economics of flat-rate AI pricing. Internal data showed that some $200/month Max users were costing Anthropic over $50,000 per month in compute, roughly 250 times what they paid. The tier was introduced specifically to manage this cost imbalance while retaining high-value users.

Anthropic has also expanded into vertical-specific subscriptions. The Education plan offers institution-wide licensing and research tools, while Healthcare tools support clinical trials and claims processing.

Subscriptions also serve as packaging vehicles for higher-value capabilities: Cowork and Claude Code are bundled into Pro and Team tiers, using plan-based gating to pull customers up-market.

Claude Code and agentic products

Claude Code is Anthropic's fastest-growing and most publicly quantified sub-business. It is an agentic coding tool launched into general availability in May 2025 that can autonomously read codebases, edit files, run commands, and write, debug, and refactor code across entire repositories. It integrates with development tools across terminal, IDE, desktop, and browser.

The revenue trajectory has been extraordinary:

Over $500 million run-rate by September 2025

$1 billion run-rate by December 2025

Over $2.5 billion run-rate by February 2026

Enterprise use represents over half of Claude Code revenue. At $14 billion total company run-rate, Claude Code accounts for roughly 18% of total revenue.

The user economics tell an important story. Average developer spend is approximately $6 per day, with high-intensity users reaching $12–$20 per day. This far exceeds the ARPU of traditional SaaS developer tools and represents a fundamental shift: a developer is no longer just a "$20/month subscriber" but a high-volume token consumer.
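The gap between per-day spend and a flat subscription is easier to see once the daily figures are converted to months. The sketch below does that conversion. The number of active days per month is an assumption (21 working days or 30 calendar days), since the text does not say which basis the per-day averages use.

```python
SUBSCRIPTION_USD_PER_MONTH = 20.0  # the "$20/month subscriber" baseline

# Daily spend figures quoted in the text (USD per developer per day).
DAILY_SPEND = {
    "average": 6.0,
    "heavy (low end)": 12.0,
    "heavy (high end)": 20.0,
}

# Assumption: the article does not state how many days per month count.
ACTIVE_DAYS = {"working days": 21, "calendar days": 30}


def monthly_spend(usd_per_day, active_days):
    """Convert a per-day spend into a monthly total."""
    return usd_per_day * active_days


def multiple_of_subscription(usd_per_day, active_days):
    """How many $20 subscriptions the monthly spend is equivalent to."""
    return monthly_spend(usd_per_day, active_days) / SUBSCRIPTION_USD_PER_MONTH


for user, per_day in DAILY_SPEND.items():
    for basis, days in ACTIVE_DAYS.items():
        total = monthly_spend(per_day, days)
        ratio = multiple_of_subscription(per_day, days)
        print(f"{user}, {basis}: ${total:.0f}/month ({ratio:.1f}x a $20 plan)")
```

Under either assumption, the average developer's monthly spend is several times the price of a $20 plan, and the heaviest users land an order of magnitude above it.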

By early 2026, approximately 4% of all public GitHub commits worldwide were authored by Claude Code, signaling a shift from "AI as a chatbot" to "AI as a production agent."

Adjacent agentic monetization is already underway. Claude Cowork extends the Claude Code workflow to non-developer knowledge work with file access, multi-step task execution, and MCP connectors. Claude Code Security targets vulnerability scanning and patch suggestions in the software delivery lifecycle.

Enterprise contracts and public sector deals

Large enterprise contracts are the fundamental driver of Anthropic's revenue scaling from $87 million to $14 billion run-rate. The company reports more than 500 customers with over $1 million in annualized spend.

The Deloitte partnership represents Anthropic's largest enterprise deployment. Claude is available to more than 470,000 Deloitte professionals globally. The alliance is structured as a multi-layered commercial agreement:

internal licensing for Deloitte's own use

a co-created certification program training 15,000 specialists on Claude

co-developed industry solutions, including "10X Analyst" for financial services

In the public sector, the State of Maryland partnership (November 2025) serves as a blueprint for state-level AI monetization. Claude automates processing of 150,000 monthly documents for caseworkers, and a Claude-powered chatbot assisted 600,000 Marylanders in accessing benefits, reducing call center volume.

Not all potential contracts are accepted. Anthropic walked away from a $200 million Department of War contract because it refused to allow its models to be used for autonomous weapons or mass surveillance. The Pentagon also labeled Anthropic a supply chain risk.

Anthropic's cost centers

Anthropic's cost structure reflects the economics of AI: revenue grows with usage and seats, but so do costs of compute for training and inference. In 2024, the company spent $1.35 billion on AWS alone, roughly 226% of its revenue. By September 2025, AWS spend climbed to $2.66 billion, consuming 104% of year-to-date revenue.
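The two spend-to-revenue ratios above imply the revenue they were measured against. The sketch below backs those figures out. It assumes the percentages refer to revenue for the same period as the AWS spend, which is a different measure from the annualized run-rate snapshots quoted elsewhere in this breakdown.

```python
def implied_revenue(spend_usd_b, spend_pct_of_revenue):
    """Back out revenue (USD billions) from spend and spend-as-%-of-revenue."""
    return spend_usd_b / (spend_pct_of_revenue / 100.0)


# Figures quoted in the text: AWS spend in USD billions and its share of revenue.
revenue_2024 = implied_revenue(1.35, 226)
revenue_2025_ytd = implied_revenue(2.66, 104)

print(f"implied 2024 revenue: ${revenue_2024:.2f}B")
print(f"implied revenue through September 2025: ${revenue_2025_ytd:.2f}B")
```

On these inputs, AWS spend alone exceeded revenue in both periods, but the ratio fell by more than half from 2024 to the first nine months of 2025.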

The company remains cash-flow negative despite its $14 billion revenue run rate, with 2026 projected spend of $12 billion on training and $7 billion on inference. However, internal projections show cash burn dropping to one-third of revenue in 2026 and 9% by 2027.

Compute and infrastructure (training + inference)

This is the dominant cost center by a wide margin. Anthropic has structured its three largest partnerships around guaranteed access to compute hardware and cloud capacity:

| Partner | Total investment committed | Infrastructure commitment | Specialized hardware |
| --- | --- | --- | --- |
| Amazon (AWS) | $8 billion | $75B–$105B (multi-year) | Trainium2, Inferentia |
| Google | $3 billion | Multi-year gigawatt-scale | TPU v5 (up to 1M chips) |
| Microsoft | $5 billion | $30B Azure commitment | Grace Blackwell GPUs |

These deals follow a "reciprocal capitalization" structure: hyperscalers invest billions in Anthropic, which Anthropic then commits to spend back on that hyperscaler's cloud infrastructure. This ensures guaranteed compute access during global GPU shortages and prevents any single hardware provider from constraining training capacity.

Anthropic is also shifting toward owned infrastructure via the $50 billion Fluidstack partnership. Custom data centers in Texas and New York are slated to come online in 2026. The economic logic is converting variable OPEX into amortized CAPEX:

| Metric | Cloud GPU rental (H100) | Owned / partnered infrastructure |
| --- | --- | --- |
| 10,000 GPU-months cost | $20M–$25M | $15M–$18M (amortized) |
| Lead time | Instant | 12–24 months |
| Control level | Provider-dependent | Self-determined |

At scale, that translates to estimated savings of roughly 25–30% on compute, the company's largest cost line.
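The savings estimate can be checked against the cost ranges in the comparison table. The sketch below pairs the low ends and the high ends of the two ranges, then shows the wider spread that results if the ranges are crossed. The pairing is an assumption, since the table does not say which rental price corresponds to which owned cost.

```python
GPU_MONTHS = 10_000

# Cost of 10,000 GPU-months in USD millions, from the comparison table.
RENTAL_USD_M = (20.0, 25.0)
OWNED_USD_M = (15.0, 18.0)


def saving_pct(rental, owned):
    """Percentage saved by owning instead of renting."""
    return (rental - owned) / rental * 100.0


def per_gpu_month(total_usd_m):
    """Convert a total in USD millions into USD per GPU-month."""
    return total_usd_m * 1_000_000 / GPU_MONTHS


low_pairing = saving_pct(RENTAL_USD_M[0], OWNED_USD_M[0])   # low vs low
high_pairing = saving_pct(RENTAL_USD_M[1], OWNED_USD_M[1])  # high vs high
worst_case = saving_pct(RENTAL_USD_M[0], OWNED_USD_M[1])    # cheap rental, costly owned
best_case = saving_pct(RENTAL_USD_M[1], OWNED_USD_M[0])     # costly rental, cheap owned

print(f"matched pairings: {low_pairing:.0f}% to {high_pairing:.0f}%")
print(f"crossed pairings: {worst_case:.0f}% to {best_case:.0f}%")
print(f"rental per GPU-month: ${per_gpu_month(RENTAL_USD_M[0]):,.0f}"
      f" to ${per_gpu_month(RENTAL_USD_M[1]):,.0f}")
```

Matched pairings give 25% to 28%, in line with the quoted estimate. Crossing the ranges widens the possible outcome to anywhere between 10% and 40%, which shows how sensitive the saving is to the actual rental and build costs.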

People and R&D

Anthropic employs approximately 2,500 people as of early 2026, up from 240 in early 2023, a more than 10x increase in three years.

Compensation levels are among the highest in the industry:

| Role | Estimated total compensation |
| --- | --- |
| Software Engineer (New Grad) | $380,000 |
| Machine Learning Engineer | $417K–$878K |
| Research Scientist (Interpretability) | $315K–$560K |
| Strategic Account Executive | $290K–$435K |
| Business Operations (Senior) | Up to $642,000 |

Anthropic also provides a 1:1 equity donation match up to 25% of the grant, reinforcing its "alignment with humanity" culture.

Safety, evaluation, and risk governance

Anthropic's Responsible Scaling Policy (RSP) is a voluntary framework to mitigate catastrophic risks. Operationalizing this requires dedicated internal safety research, model evaluations, red-teaming, incident response, and enterprise assurance processes. These costs tend to rise as models become more capable and as deployments expand into regulated sectors like healthcare, government, and financial services.

Anthropic increases safety measures for high-capability AI models

The Constitutional AI training approach itself requires additional compute and human oversight during the training process.

The Long-Term Benefit Trust governance structure — an independent body of five financially disinterested members holding "Class T" shares with growing board election power — represents an ongoing governance cost and constraint. Current members include Neil Buddy Shah (CEO, Clinton Health Access Initiative), Richard Fontaine (CEO, Center for a New American Security), and Mariano-Florentino Cuéllar (former California Supreme Court Justice and President of the Carnegie Endowment for International Peace).

Legal and regulatory costs

A major one-off but financially material example: Anthropic agreed to pay $1.5 billion to settle an authors' class-action copyright dispute over training data. This illustrates how data provenance, IP governance, and litigation risk can become massive, sudden cash costs for frontier AI companies.

Ongoing regulatory compliance costs include HIPAA-configurable offerings, data residency infrastructure, and the operational overhead of the "principled refusal" strategy — walking away from the $200 million DoD contract and forgoing "several hundred million dollars" in revenue from restricted customers.

M&A and strategic investments

Anthropic has made targeted acquisitions to accelerate its product roadmap. Vercept (February 2026) brought vision-based computer automation capabilities for "computer use" — enabling Claude to interact with software interfaces by "seeing" the screen.

Deal prices were not disclosed, but integration costs are notable: engineering attention, product sunset migrations (Vercept wound down its existing products), and security reviews.

Anthropic’s “No-Ads” Super Bowl commercial was a major success

Anthropic's "No-Ads" Super Bowl campaign represents a significant marketing investment. The "Problem Solver" ad highlighted risks of "syllogistic ads" in AI assistants and positioned Claude as a trusted, ad-free advisor.

Anthropic's competitors

Anthropic's monetization model is increasingly the standard template for frontier AI labs. The competitive differences are less about pricing structure and more about distribution channels, enterprise trust posture, agent capability maturity, and compute cost management.

A unique dynamic in Anthropic's competitive landscape is that some of its largest competitors are simultaneously its largest infrastructure partners and investors. This creates a "frenemy" relationship where each hyperscaler distributes Claude on its platform while also selling competing AI products.

OpenAI

OpenAI is Anthropic's most direct competitor, competing across both consumer and enterprise surfaces with the GPT model family and ChatGPT product line. OpenAI has enormous consumer scale with hundreds of millions of weekly active users and tens of millions of paid subscribers. It also has massive funding and infrastructure arrangements, including the reported $500 billion "Stargate" project.

The key competitive difference is positioning. OpenAI is more consumer-dominant in mindshare, while Anthropic brands itself as enterprise and developer-first. When OpenAI announced testing ads in ChatGPT for free users in early 2026, Anthropic directly attacked the move in its Super Bowl campaign as a threat to user trust.

On financial efficiency, OpenAI's projected cash burn rate is 57% of revenue for the comparable period, versus Anthropic's projected 9% by 2027. And the founding story itself is a competitive narrative: the Amodei siblings and other co-founders left OpenAI over safety disagreements, positioning Anthropic as the "principled alternative."

Google (DeepMind / Gemini)

Google is both a competitor and a distribution partner. It sells Gemini AI products integrated across its ecosystem (Search, Workspace, Android) while also distributing Claude models via Google Cloud's Vertex AI. Google invested $3 billion in Anthropic and provides access to up to 1 million TPU v5 chips for training and serving Claude models (in addition to Anthropic using Nvidia chips).

Competitive pressure comes from Google's ability to bundle AI into existing enterprise workflows and cloud procurement at massive scale. Google's capex is projected at roughly $85 billion in 2025.

Anthropic's defensive advantage is multi-cloud availability: it is not locked into Google's ecosystem. Anthropic also claims strong Claude performance in coding and agentic work.

Microsoft (Copilot / Azure)

Microsoft competes through its workplace AI suite (Copilot) and agent tooling. But it simultaneously distributes Claude models through Azure's "Foundry" environment as part of a $5 billion investment partnership with $30 billion in Azure compute commitment.

This dual role is strategically important. Microsoft sells "AI at work" through its owned productivity surface (Office 365 plus Copilot) and still monetizes via cloud and model marketplace. Anthropic benefits from access to Azure enterprise buyers and Grace Blackwell GPU infrastructure.

Microsoft's enterprise relationships and Windows ecosystem give it a distribution advantage in workplace AI. But Anthropic competes for the same "default AI coworker" slot with a differentiated safety and trust positioning.

The future of Anthropic

Anthropic projects $18 billion to $26 billion in revenue for 2026, with a 2028 target of $70 billion in revenue and $17 billion in cash flow. Cash burn is expected to drop from one-third of revenue in 2026 to 9% by 2027. The company is positioning for a 2026–2027 IPO, with the "efficiency over scale" philosophy championed by CEO Dario Amodei as a key selling point for institutional investors.
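The projections above can be translated into dollar terms with simple arithmetic. The sketch below is a back-of-the-envelope reading, assuming the one-third burn ratio applies to the full 2026 revenue range and that the 2028 targets are both annual figures. It is not a reconstruction of Anthropic's internal model.

```python
# Projections quoted in the text, in billions of USD.
REVENUE_2026_USD_B = (18.0, 26.0)
BURN_SHARE_2026 = 1.0 / 3.0
REVENUE_2028_USD_B = 70.0
CASH_FLOW_2028_USD_B = 17.0

# Implied 2026 cash burn at each end of the revenue range.
burn_2026 = tuple(r * BURN_SHARE_2026 for r in REVENUE_2026_USD_B)

# Cash flow as a share of revenue if the 2028 targets are met.
cash_margin_2028 = CASH_FLOW_2028_USD_B / REVENUE_2028_USD_B

# Revenue multiple needed between 2026 and 2028 to reach the target.
growth_needed = tuple(REVENUE_2028_USD_B / r for r in REVENUE_2026_USD_B)

print(f"implied 2026 burn: ${burn_2026[0]:.1f}B to ${burn_2026[1]:.1f}B")
print(f"2028 cash flow margin: {cash_margin_2028:.1%}")
print(f"revenue multiple needed by 2028: "
      f"{growth_needed[1]:.1f}x to {growth_needed[0]:.1f}x")
```

Read this way, the plan implies burning roughly $6 billion to $9 billion in 2026, then growing revenue about 2.7 to 3.9 times over two years while moving to a cash flow margin of about 24%.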

The strategic bet is that the biggest money in frontier AI will come from becoming the enterprise "operating layer" for agentic work, not from a single chat app. Cowork extends Claude Code's workflow execution model to non-developer knowledge work. The pricing justification shifts from "token consumption" to "labor substitution" and "workflow automation," a framing that unlocks much larger budgets inside enterprise buyers.

The land-and-expand enterprise motion is already visible. Anthropic reports more than 500 customers with over $1 million in annualized spend. Customers typically begin with a single use case — API, Claude Code, or "Claude for Work" — and expand across the organization. Revenue mix is expected to tilt further toward contracted enterprise subscriptions.

Policy, security, and geopolitical disputes are becoming material business constraints. Anthropic has already forgone several hundred million dollars in revenue by enforcing usage restrictions and walking away from defense contracts.

The "no-ads" strategy, Constitutional AI framework, PBC structure, and safety governance collectively create a differentiated trust brand that is difficult for competitors to replicate. This positions Anthropic as the preferred AI partner for regulated industries, risk-averse enterprises, and governments seeking civilian-focused AI deployments. Whether that trust premium translates into $70 billion in revenue and durable profitability by 2028 will depend on execution — but the monetization architecture is already in place.